Processing of industrial IoT data streams

Streaming industrial IoT data can be complicated to process, because it often involves a large amount of data, received in continuous streams that never end. There’s never one single machine to monitor. Always a number of them. Multiple buildings, one or more factories, a fleet of ships, all of an operator’s subsea oil pipelines. That kind of scale.

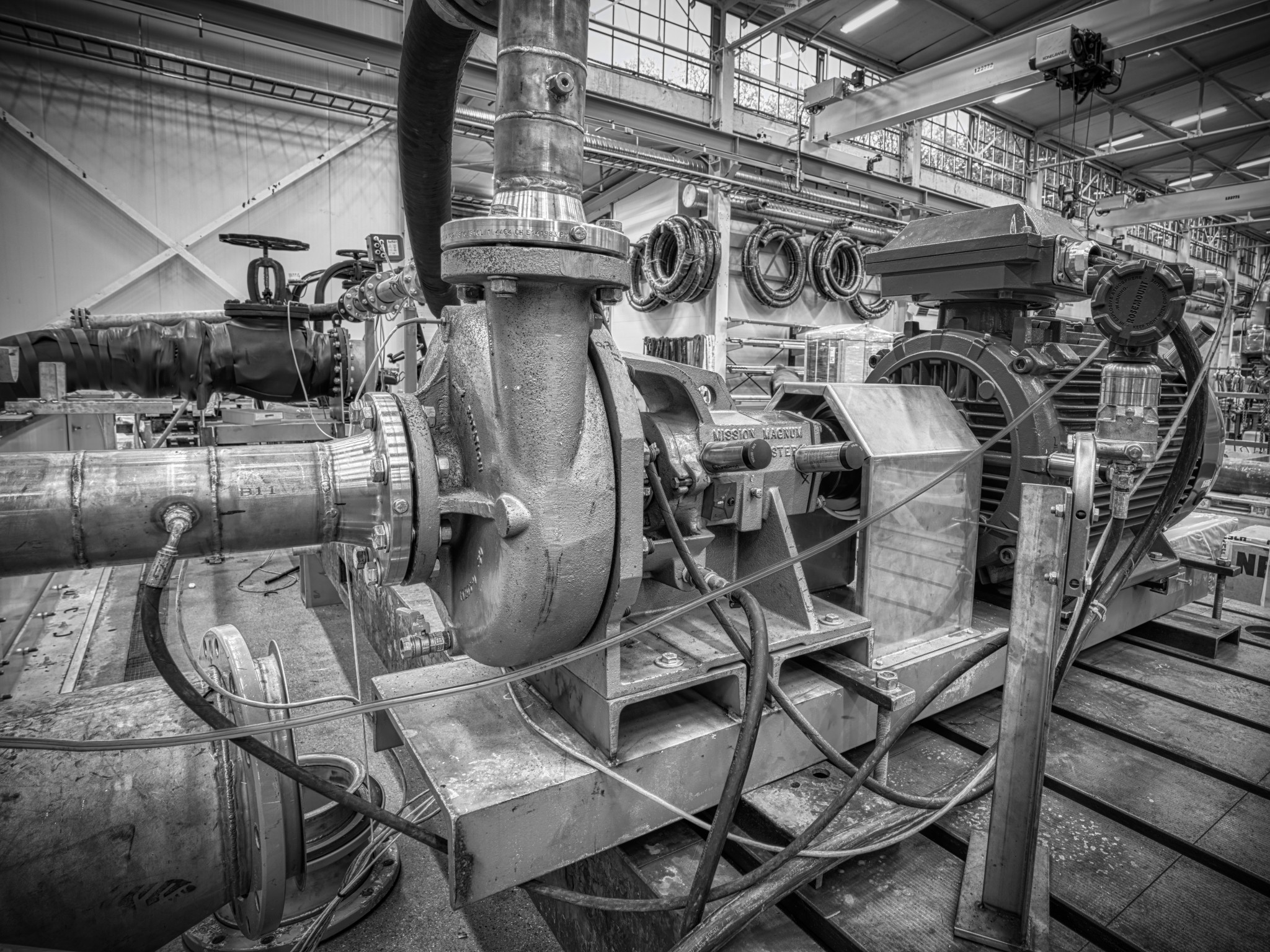

Industrial pump photo by Christian Egeberg

IoT data may need to be buffered for multiple weeks, if your sensors and equipment are at sea. The data may need to be transferred to the cloud when the ship is in a harbour, or be brought to shore on removable disks. IoT data streams don’t have to be transmitted in real time to provide value. Sometimes, though, you’ll want to perform at least parts of the processing on site. Examples include optimization of fuel consumption for tankers and tug boats, or detection of anomalies for subsea oil pumps.
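The buffering described above can be sketched as a small store-and-forward queue. This is a minimal illustration, assuming a local SQLite file as the on-board buffer; the class name `StoreAndForwardBuffer`, the table layout, and the `upload` callback are all hypothetical, and a real deployment would also have to handle disk limits and retries.

```python
import sqlite3
from typing import Callable, List, Tuple

Row = Tuple[str, float, float]  # (tag, unix timestamp, value)


class StoreAndForwardBuffer:
    """Hypothetical local buffer that keeps samples until a link is available."""

    def __init__(self, path: str = ":memory:") -> None:
        # Use a file path on the vessel; ":memory:" is only for demonstration.
        self._db = sqlite3.connect(path)
        self._db.execute(
            "CREATE TABLE IF NOT EXISTS buffer ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "tag TEXT NOT NULL, ts REAL NOT NULL, value REAL NOT NULL)"
        )
        self._db.commit()

    def append(self, tag: str, ts: float, value: float) -> None:
        self._db.execute(
            "INSERT INTO buffer (tag, ts, value) VALUES (?, ?, ?)",
            (tag, ts, value),
        )
        self._db.commit()

    def pending(self) -> int:
        return self._db.execute("SELECT COUNT(*) FROM buffer").fetchone()[0]

    def flush(self, upload: Callable[[List[Row]], None], batch_size: int = 1000) -> int:
        """Send buffered rows in insertion order; delete a batch only after upload returns."""
        sent = 0
        while True:
            rows = self._db.execute(
                "SELECT id, tag, ts, value FROM buffer ORDER BY id LIMIT ?",
                (batch_size,),
            ).fetchall()
            if not rows:
                return sent
            # If upload raises, the rows stay in the buffer for the next attempt.
            upload([(tag, ts, value) for _, tag, ts, value in rows])
            self._db.execute("DELETE FROM buffer WHERE id <= ?", (rows[-1][0],))
            self._db.commit()
            sent += len(rows)


# Usage: buffer while at sea, flush when the ship reaches a harbour.
buffer = StoreAndForwardBuffer()
for i in range(5):
    buffer.append("engine-1/fuel-rate", 1000.0 + i, 12.5 + i)

uploaded: List[Row] = []
sent_count = buffer.flush(uploaded.extend, batch_size=2)
```

Deleting a batch only after the upload call returns means a dropped connection leaves the data in place, at the cost of possibly sending a batch twice.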

It’s perfectly possible to run trained machine learning algorithms on quite primitive equipment, like relatives of the Raspberry Pi, or a small rugged PC. Maybe directly on your IoT gateway, which in some cases can be as powerful as any server blade. You also won’t need a lot of machines to be able to deploy a reasonably redundant Kubernetes cluster, even if your architectural choices become much more limited than they would have been with a cloud provider.

As a Data Scientist, you’ll often find that just observing the values that stream by doesn’t give you enough context to perform complex calculations. You may need a cache of recent data, or a place to store partial precalculations, aggregates, and potentially the output of machine learning models, typically for use in dashboards and interactive parameterized reports. I’ll call this data cache warm storage.

Warm storage is often used by Data Science applications, and algorithms that call machine learning models, in order to quickly retrieve sufficient sensor values and state changes from streaming data. Warm Storage data may typically be used to detect anomalies, generate warnings, or calculate Key Performance Indicators that add insight and value to streaming data for customers.
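To make the idea of a cache of recent data concrete, here is a minimal in-memory sketch. It is not the production solution discussed in the presentation; the class `WarmStore`, its methods, and the tag name are hypothetical, and it assumes that samples for each tag arrive in timestamp order.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, List, Tuple

Sample = Tuple[float, float]  # (unix timestamp in seconds, value)


class WarmStore:
    """Hypothetical in-memory warm storage keeping recent samples per tag."""

    def __init__(self, retention_seconds: float) -> None:
        self.retention_seconds = retention_seconds
        self._samples: Dict[str, Deque[Sample]] = defaultdict(deque)

    def insert(self, tag: str, timestamp: float, value: float) -> None:
        # Assumption: timestamps per tag are non-decreasing.
        series = self._samples[tag]
        series.append((timestamp, value))
        # Drop everything older than the retention period.
        cutoff = timestamp - self.retention_seconds
        while series and series[0][0] < cutoff:
            series.popleft()

    def recent(self, tag: str, since: float) -> List[Sample]:
        """Return samples for one tag with timestamp >= since."""
        return [(t, v) for t, v in self._samples.get(tag, ()) if t >= since]


# Usage: keep ten minutes of data, then fetch the last few minutes for a model.
store = WarmStore(retention_seconds=600)
for t in range(0, 1200, 60):
    store.insert("pump-1/pressure", float(t), 4.0 + t / 1000)

window = store.recent("pump-1/pressure", since=900)
print(len(window))  # 5 samples: t = 900, 960, 1020, 1080, 1140
```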

Those KPIs are often aggregated to minute, ten minute, hour or day averages, and stored back into warm storage, with a longer retention period, to facilitate reuse by further calculations. The stored partial aggregates may also be used for presentation purposes, typically by dashboards, or in some cases reports with selectable parameters. Thus optimal retention periods may vary quite a bit between tenants and use cases.
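As a rough illustration of the aggregation step, the sketch below groups samples into fixed time buckets and averages each bucket. The function name `bucket_averages` is hypothetical, and it computes a plain unweighted mean of the samples in each bucket, which is only one of several reasonable definitions of an average for irregularly sampled data.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def bucket_averages(
    samples: Iterable[Tuple[float, float]], bucket_seconds: int
) -> Dict[int, float]:
    """Average (timestamp, value) samples per fixed-size time bucket.

    Keys are bucket start times in seconds; values are unweighted means.
    """
    sums: Dict[int, float] = defaultdict(float)
    counts: Dict[int, int] = defaultdict(int)
    for timestamp, value in samples:
        bucket_start = int(timestamp // bucket_seconds) * bucket_seconds
        sums[bucket_start] += value
        counts[bucket_start] += 1
    return {start: sums[start] / counts[start] for start in sorted(sums)}


samples = [(0, 1.0), (30, 3.0), (60, 5.0), (119, 7.0), (600, 9.0)]

minute_avg = bucket_averages(samples, 60)
# {0: 2.0, 60: 6.0, 600: 9.0}

ten_minute_avg = bucket_averages(samples, 600)
# {0: 4.0, 600: 9.0}
```

When deriving coarser aggregates from finer ones instead of from raw samples, keeping the per-bucket count alongside the average makes it possible to weight the buckets correctly.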

A good Warm Storage needs flexible low latency querying and filtering for multiple tags simultaneously. It must be able to relate to hierarchical tag IDs, representing an asset hierarchy, for flexible matching and filtering. It needs storage support for KPIs and aggregates calculated from within algorithms that may optionally call machine learning models. It needs query support for seamlessly mixing calculated data, sensor data and state changes.
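One way to picture filtering on hierarchical tag IDs is segment-wise wildcard matching. The sketch below assumes tag IDs are slash-separated paths such as `plant-1/line-a/pump-01/pressure`; that format, the example tags, and the helper names are assumptions for illustration, not a description of any particular product.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List


def tag_matches(tag: str, pattern: str) -> bool:
    """Match a tag against a pattern, one hierarchy level at a time.

    A wildcard in the pattern only matches within a single level,
    so "plant-1/*/pressure" does not match a tag with four levels.
    """
    tag_parts = tag.split("/")
    pattern_parts = pattern.split("/")
    if len(tag_parts) != len(pattern_parts):
        return False
    return all(fnmatchcase(t, p) for t, p in zip(tag_parts, pattern_parts))


def filter_tags(tags: Iterable[str], patterns: Iterable[str]) -> List[str]:
    """Return the tags that match at least one pattern, in input order."""
    pattern_list = list(patterns)
    return [tag for tag in tags if any(tag_matches(tag, p) for p in pattern_list)]


tags = [
    "plant-1/line-a/pump-01/pressure",
    "plant-1/line-a/pump-01/temperature",
    "plant-1/line-b/pump-07/pressure",
    "plant-2/line-a/fan-03/rpm",
]

pump_pressures = filter_tags(tags, ["plant-1/*/pump-*/pressure"])
# ['plant-1/line-a/pump-01/pressure', 'plant-1/line-b/pump-07/pressure']
```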

An often overlooked topic is that data should be kept out of Warm Storage by default, and only stored there when a tenant or tag has been explicitly opted in. Every insert into Warm Storage will cost you a little bit of performance, storage space, and memory space for indexes, before it is removed as obsolete. All data that is scanned through, as part of index scans during queries, will also cost you a little bit of I/O, memory and CPU, every time you run a query. Tenants that have no intention to use Warm Storage should not have their data stored there. Neither should tags that will never be retrieved from Warm Storage. The first rule of warm storage is: Don’t store data you won’t need. Excess data affects the performance of retrieving the data you want, costs more money, and brings you closer to a need for scaling out.
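The rule of not storing data you won’t need can be enforced with a small check in front of every insert. This is a sketch under assumptions: the class `WarmStoragePolicy`, the tenant names, and the use of slash-separated tag prefixes are all hypothetical.

```python
from typing import Dict, Set


class WarmStoragePolicy:
    """Hypothetical allowlist: nothing is stored unless explicitly enabled."""

    def __init__(self) -> None:
        self._enabled: Dict[str, Set[str]] = {}

    def opt_in(self, tenant: str, tag_prefix: str) -> None:
        """Enable warm storage for one tenant and one branch of its tag hierarchy."""
        self._enabled.setdefault(tenant, set()).add(tag_prefix)

    def should_store(self, tenant: str, tag: str) -> bool:
        # Unknown tenants have no prefixes, so the answer defaults to False.
        prefixes = self._enabled.get(tenant, ())
        return any(tag == p or tag.startswith(p + "/") for p in prefixes)


policy = WarmStoragePolicy()
policy.opt_in("tenant-a", "plant-1/line-a")

policy.should_store("tenant-a", "plant-1/line-a/pump-01/pressure")  # True
policy.should_store("tenant-a", "plant-1/line-b/pump-07/pressure")  # False
policy.should_store("tenant-b", "plant-1/line-a/pump-01/pressure")  # False
```

Checking the policy before the insert, instead of cleaning up afterwards, avoids paying the write and index cost for data nobody will query.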

A good Warm Storage should have a Python wrapper, to make it easier to use from Data Science projects. If you’re using Warm Storage in a resource restricted environment, the API should behave the same as in a cloud environment.

I’ll get into the nitty gritty details of all this in the video presentation I made while preparing for the Knowit Developer Summit 2020. It’s about real world experience with building and deploying industrial IoT warm storage solutions during the last couple of years. I’ll talk about some of the existing storage components on the market, strategies for how to utilize them effectively, some of the unexpected problems we encountered on our way, and what we learnt from them. I’ll describe the warm storage solution we’re currently using in production, including the lazy aggregator, and a rule engine with fully configurable aggregating input selectors, powerful expression parsing, machine learning model integration, plus customizable outputs and alerts. Check out the video, if any of this sounds interesting, and please tell me what you think about it in the response section.

Processing of Industrial IoT data streams: Presentation about how to utilize rapidly streaming sensor values for more than just trivial value limit alerts. Topics include strategies, architecture, temporary storage, precalculation, aggregation, configurable rules, machine learning model integration, performance and scaling. Based on real world project experience during the last couple of years.